DaVinci Resolve GPU memory full

11/9/2023

They have no control over anything else, because Nvidia's code is a black box. You don't seem to get that the developers can only choose between "crappy", "crappier" and "crappiest" settings. Just like some developers are forced to use TAA, the same will happen with VRS.

No they don't, the Nvidia implementation does. So instead of a full-quality image you get a blurry, garbage mess, and you're telling me "the developers decide that".

Just as you don't decide on the implementation of Resolve's color engine; BMD does. The user isn't deciding anything anyway, with or without VRS; the game/application devs are. By the same logic you could say AMD is crappy because they support OpenGL, which isn't a physical multispectral path tracing framework but crappy fake shading. How that relates to Nvidia being crappy, I don't get. VRS does not decide it randomly; it is an additional method that developers can use and control if they want to.

I'd like to decide this with my crappy evolutionary invention (as you put it): my eyes, not some random algorithm. Variable Rate Shading (VRS) will decide which part of the frame is detailed and which part gets far less detail.

IvanovS wrote: I think you're confusing things.

Hence I wouldn't be surprised if their memory management is not as efficient as AMD's. (Not efficient = not as good at everything other than games.)

Notice I said DLSS and VRS are not related to Resolve, but to Nvidia cutting corners and delivering performance at the expense of image quality and raw power. Those are features that developers can optionally implement.

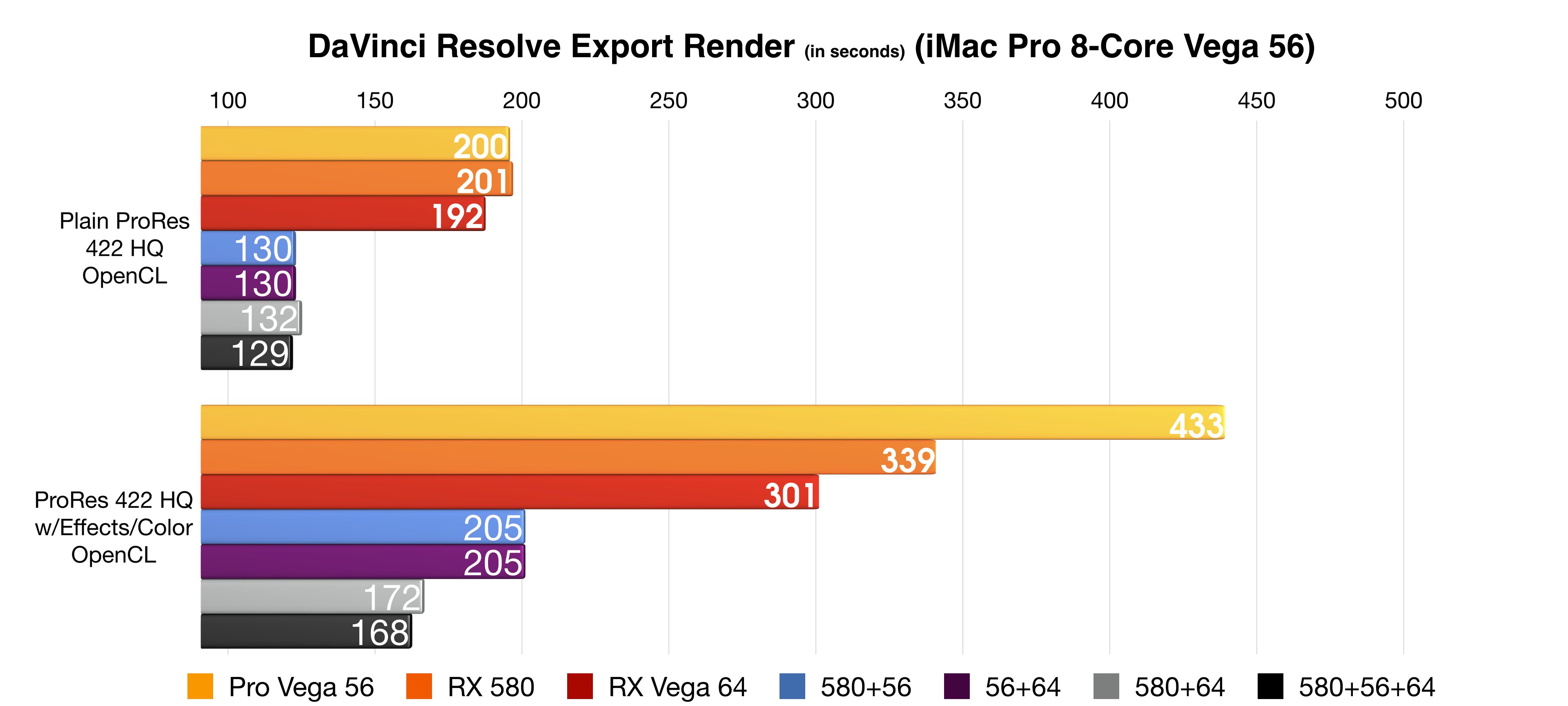

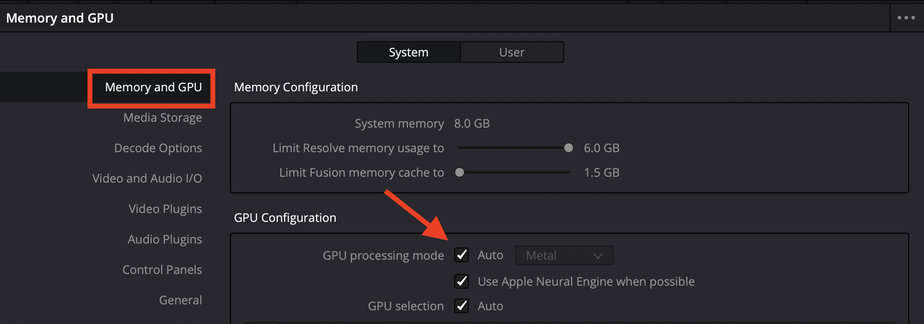

I agree this is likely a BM DR + Nvidia issue; I'm not sure how "DLSS, VRS and so on" applies to this.

Nvidia cuts a lot of corners to gain maximum performance (I'm not talking about Resolve here, but games and such), like extreme delta color compression, DLSS, VRS and so on, so I wouldn't be surprised if that's just how memory works on Nvidia cards. Same end result, different stuff behind the scenes.

Also, I really doubt it's a Resolve issue. Get something like HWiNFO64 for accurate measurements.

Also, AMD doesn't have CUDA cores; they have stream processors. Windows Task Manager is not an accurate measurement tool.

I think a 16GB card with 5000+ CUDA cores could be a great shout over the 3080, which has 10GB and 8000 CUDA cores. It seems that 8GB SHOULD be enough for 4K footage, but perhaps Resolve is simply not utilising it correctly and just needs optimisation.

Either way, it sounds like waiting for Big Navi would be wise, to see what they offer. To confirm: when I get the "GPU memory full" message, Task Manager says I am only actually using approx. 5-6GB of VRAM, so this seems to tie in with one of the comments above.

Competition will be good for us consumers. The first are scheduled for the 2nd half of 2020 and the Intel Xe for the 1st half of 2021. We are all waiting for the next-gen high-end AMD Navi and also for the coming Intel Xe graphics cards.

Higher CUDA/OpenCL performance gives a faster Resolve. The other factor is CUDA/OpenCL performance. I still remember people with GTX 1080 Tis with 11 GB of VRAM reporting "GPU memory full" errors while using Noise Reduction or other Resolve functions that simultaneously use data from two or more video frames. For 4K, the absolute minimum is 6 GB of VRAM, but 8 GB or more is recommended. There are two factors that are important for the graphics card.
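For anyone who wants a second opinion besides Task Manager on an Nvidia card, here is a minimal sketch that asks the driver directly through `nvidia-smi`. It assumes `nvidia-smi` is on the PATH; the function names `parse_vram_csv` and `query_vram` are made up for this example and are not part of any tool mentioned in the thread.

```python
import subprocess


def parse_vram_csv(text):
    """Parse nvidia-smi CSV lines such as '5632, 8192' into (used_mib, total_mib) tuples."""
    readings = []
    for line in text.strip().splitlines():
        used, total = (int(field.strip()) for field in line.split(","))
        readings.append((used, total))
    return readings


def query_vram():
    """Return one (used_mib, total_mib) tuple per GPU.

    Assumes nvidia-smi is installed and on the PATH; raises if it is missing or fails.
    """
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=memory.used,memory.total",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_vram_csv(result.stdout)
```

Calling `query_vram()` while the "GPU memory full" message is on screen gives a figure to compare against what Task Manager shows. It reports memory used on the whole card by every process, not by Resolve alone.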

Resolve does all its image processing on the GPU. The CPU is used to run the app itself and to handle disk I/O, Fusion, and the compression and decompression of codecs.
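To get a rough feel for why functions that hold several frames at once eat VRAM, here is a back-of-the-envelope sketch. It assumes each frame is held as RGBA in 32-bit float (4 channels x 4 bytes) and that a temporal effect keeps 5 frames resident; both numbers are illustrative assumptions, not figures published for Resolve, and real usage will include extra working buffers and caches.

```python
def frame_bytes(width, height, channels=4, bytes_per_channel=4):
    """Size of one uncompressed frame in bytes (default assumption: RGBA, 32-bit float)."""
    return width * height * channels * bytes_per_channel


def working_set_mib(width, height, frames_held):
    """MiB needed to keep `frames_held` uncompressed frames in memory at once."""
    return frames_held * frame_bytes(width, height) / 2**20


uhd_frame = frame_bytes(3840, 2160)           # 132,710,400 bytes, about 126.6 MiB
five_frames = working_set_mib(3840, 2160, 5)  # 632.8125 MiB for five UHD frames
print(uhd_frame, five_frames)
```

Under these assumptions a single node holding five UHD frames already needs over 600 MiB before any intermediate results, so a timeline with several such nodes can plausibly fill a card well before the raw footage size would suggest.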
